Co-authored by Thijs de Vries and Walter van der Scheer

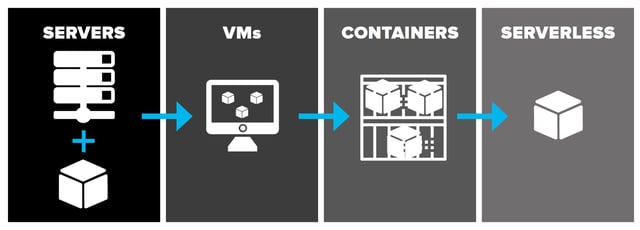

We’re moving towards a serverless future, one where applications are developed in the cloud. The serverless approach promises to solve many of the issues of traditional (cloud) hosting while providing the latest technology at virtually unlimited scale and at unprecedentedly low cost. In this article, we explain what serverless is and how it works.

What is Serverless?

Serverless simply means that you can store data and execute functions on a cloud platform whenever you want to, while the cloud provider auto-scales the process to your needs. You never over- or under-provision capacity and the cloud provider securely takes care of the servers that execute the functions.

Storage and Compute

Cloud hosting took away a lot of the maintenance and installation burden that came with hosting applications on machines in your own racks. Serverless takes installing, maintaining, and updating software and applications to a whole new level of efficiency and effectiveness. It is highly efficient because it is designed to handle peak loads by scaling out automatically, in principle without limit.

With serverless, there is no need to install software or services on your own machines. You simply call a function that the cloud provider runs for that specific service, either directly as an endpoint or triggered by an event as part of a process (like automatically resizing an image when a user uploads a profile picture). Within milliseconds, the cloud provider makes machines available to run the function for you. Besides storage, there is also a growing number of serverless compute services that offer proven technology, including functions that are unique to a particular cloud provider.
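The profile-picture example can be sketched as an AWS Lambda-style handler in Python. This is a minimal sketch, assuming the function is triggered by an Amazon S3 upload notification; the 256-pixel limit, the `thumbnails/` prefix and the helper `thumbnail_size` are hypothetical, and the actual download, resize and upload steps (which would need an image library and the AWS SDK) are left out.

```python
import json
from urllib.parse import unquote_plus

# Hypothetical upper bound for a thumbnail side, in pixels (an assumption, not from the article).
MAX_SIDE = 256


def thumbnail_size(width: int, height: int, max_side: int = MAX_SIDE) -> tuple[int, int]:
    """Scale (width, height) down so the longer side is at most max_side, keeping the aspect ratio."""
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    scale = min(1.0, max_side / max(width, height))  # never upscale small images
    return max(1, round(width * scale)), max(1, round(height * scale))


def lambda_handler(event, context):
    """Entry point the platform invokes once per upload event.

    Assumes an S3 "object created" notification. Here we only collect which
    objects to resize and where the (hypothetical) thumbnails should go.
    """
    jobs = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # S3 URL-encodes object keys in event notifications (spaces become '+').
        key = unquote_plus(record["s3"]["object"]["key"])
        jobs.append({"bucket": bucket, "key": key, "target": f"thumbnails/{key}"})
    return {"statusCode": 200, "body": json.dumps(jobs)}
```

For example, `thumbnail_size(1024, 768)` gives `(256, 192)`. The provider runs this code only when an upload event arrives, so nothing is running (or billed) between uploads.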

The Latest Technology Available On-Demand

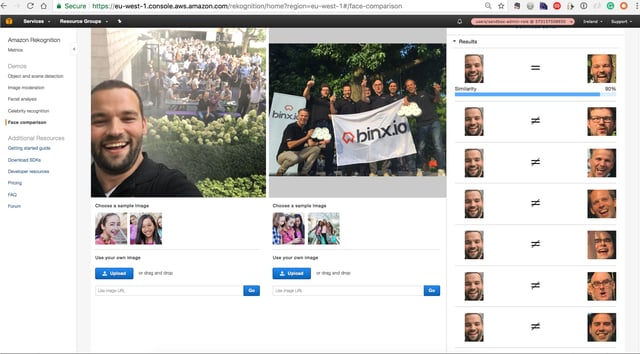

Image recognition is an example of a unique function that is available serverless. The cloud provider trains the algorithms that recognize subjects in images and makes them available as a serverless function. Let’s look at an example: we took a selfie so the service could learn to recognize a specific person.

The goal was to recognize this person in other images, like the group picture below.

We fed both images into Amazon Rekognition. It immediately returned a match with 90% similarity, and all other people in the picture were recognized as different people.
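A comparison like this can be made with the `compare_faces` call of the AWS SDK for Python (boto3), which returns matched faces with a `Similarity` score in `FaceMatches` and the remaining faces in `UnmatchedFaces`. The sketch below assumes boto3 is installed and AWS credentials and a region are configured; the helper names and the 80% default threshold are our own choices, and this is not necessarily the exact setup behind the result above.

```python
from typing import Any


def summarize_comparison(response: dict[str, Any]) -> dict[str, Any]:
    """Condense a CompareFaces response into the best similarity and face counts."""
    matches = response.get("FaceMatches", [])
    best = max((m["Similarity"] for m in matches), default=None)
    return {
        "best_similarity": best,  # None when no face passed the threshold
        "matched_faces": len(matches),
        "other_faces": len(response.get("UnmatchedFaces", [])),
    }


def compare_faces(source_path: str, target_path: str, threshold: float = 80.0) -> dict[str, Any]:
    """Send a reference photo and a group photo to Amazon Rekognition.

    boto3 is imported here so summarize_comparison can be used without it.
    """
    import boto3

    client = boto3.client("rekognition")
    with open(source_path, "rb") as src, open(target_path, "rb") as tgt:
        response = client.compare_faces(
            SourceImage={"Bytes": src.read()},
            TargetImage={"Bytes": tgt.read()},
            SimilarityThreshold=threshold,
        )
    return summarize_comparison(response)
```

A call such as `compare_faces("selfie.jpg", "group.jpg")` (hypothetical file names) would report the best match and count every other face in the group photo as unmatched. No model training or server setup is involved on our side.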

Your Own Functions - Serverless

Of course, it’s great to be able to use what a cloud provider has already built. But you can also use serverless compute services to run your own code in response to events, while the compute service automatically manages the underlying compute resources for you. This way, you can extend other cloud services with custom logic, or create back-end services that operate at serverless scale, performance, and security. At Amazon, this service is called AWS Lambda. Google offers a comparable service with Google Cloud Functions, as does Microsoft with Azure Functions.
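A back-end service of your own can be as small as a single handler. The sketch below assumes AWS Lambda behind an Amazon API Gateway proxy integration, which passes query parameters in `event["queryStringParameters"]` (set to `None` when there are none) and expects a response with `statusCode`, `headers` and a string `body`; the `/hello` route and `name` parameter are hypothetical.

```python
import json


def lambda_handler(event, context):
    """Return a JSON greeting for a request like GET /hello?name=Ada (hypothetical route)."""
    # API Gateway sends None rather than {} when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }
```

There is no web server, operating system or scaling policy in this code: the platform starts as many copies of the handler as incoming traffic requires.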

These custom functions let engineers build apps faster with a serverless architecture. Development becomes event-driven, and you pay only for the compute resources you actually consume.
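To make “pay only for the resources you consume” concrete: AWS Lambda, for example, charges per request plus compute time measured in GB-seconds (allocated memory multiplied by execution time). The function below is a rough sketch of that model; it ignores free tiers and other charges, and the prices are parameters because they differ per provider and region and change over time.

```python
def monthly_cost(
    invocations: int,
    avg_duration_ms: float,
    memory_mb: int,
    price_per_million_requests: float,
    price_per_gb_second: float,
) -> float:
    """Estimate a pay-per-use bill: a request fee plus compute billed in GB-seconds.

    Prices are inputs on purpose; look up your provider's current rates.
    """
    # Compute actually consumed: number of runs x seconds per run x gigabytes allocated.
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    request_cost = invocations / 1_000_000 * price_per_million_requests
    return request_cost + gb_seconds * price_per_gb_second
```

With zero invocations the estimate is zero, whatever the prices. That is the key difference from an always-on server, which is billed whether or not it handles any traffic.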

Serverless vs Containers

So, does all of this mean that the era of containers is entirely over? No, it doesn't, because serverless functions are not always the best solution.

The Predictability of a Process

The easiest way to choose between serverless and containers is to look at how the functions are used.

Serverless functions are ideal for workloads with spiky traffic that are not strictly speed-critical, while containers are the right option for mission-critical workloads with predictable processes.

If functions have not been used for some time, the platform can shut them down. When you then call that endpoint, the system needs some time to boot (think milliseconds, sometimes longer), which is known as a cold start. When your system has predictable processes, provisioning the right number of servers and running containers is arguably the best option: there is very little over-capacity, yet the services are always available.

Still, in many applications, the combination of containers and serverless is unbeatable. Let’s say that you offer a service that allows consumers to purchase concert tickets. A customer "queue" must be able to handle extreme spikes in traffic, e.g., when Imagine Dragons or Taylor Swift (two of the top ten artists in the Billboard Artist 100) are in town. Serverless functions can power this queue. Once users have passed through it to the ticket selection and payment process, traffic becomes predictable, and in this scenario containers work like a charm.
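The cold-start behaviour described above can be illustrated with a toy model. This sketch assumes a single function instance that the platform shuts down after a fixed idle period; real platforms run multiple instances and do not publish a fixed idle timeout, so `idle_timeout` is a hypothetical parameter.

```python
def count_cold_starts(timestamps: list[float], idle_timeout: float) -> int:
    """Count requests that would hit a cold start in a simplified one-instance model.

    Assumption: the instance stays warm while requests arrive at most idle_timeout
    seconds apart, and is shut down (so the next request boots it again) otherwise.
    """
    cold_starts = 0
    last_seen = None
    for t in sorted(timestamps):
        if last_seen is None or t - last_seen > idle_timeout:
            cold_starts += 1  # instance was never started or has been shut down
        last_seen = t
    return cold_starts
```

For example, `count_cold_starts([0, 10, 20, 1000, 1005], idle_timeout=300)` returns 2: the first request and the first one after the long gap start cold. Steady, predictable traffic keeps the instance warm, which is one reason such workloads suit always-available containers.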

The Serverless Future

Serverless functions let engineers build apps faster, with an event-driven architecture in which organizations pay only for the resources they consume. Because functions are available on demand, serverless is ideal for processes with fluctuating usage, as well as for functions that are not strictly speed-critical.

This article is part of the Urgent Future report Cloud - It's a Golden Age.