The Evolution of ChatGPT

ChatGPT is based on the GPT (Generative Pre-trained Transformer) architecture, which OpenAI first introduced in 2018. The GPT model was trained on a massive dataset of text and could generate realistic, human-like text. It could take context into account when responding and could write in a wide range of styles and formats.

Following the success of GPT, OpenAI began to develop a version of the model specifically for conversational applications, which led to ChatGPT. The model was trained on a large dataset of conversational text, allowing it to pick up the nuances of language and respond in a way that suits the context of the conversation. This required a significant amount of training data and a powerful architecture to handle the complexity of natural language. The model also needed to generate a wide range of text responses, from short answers to long-form prose and code, which called for a flexible architecture that could handle a multitude of inputs and outputs.

So, what is ChatGPT?

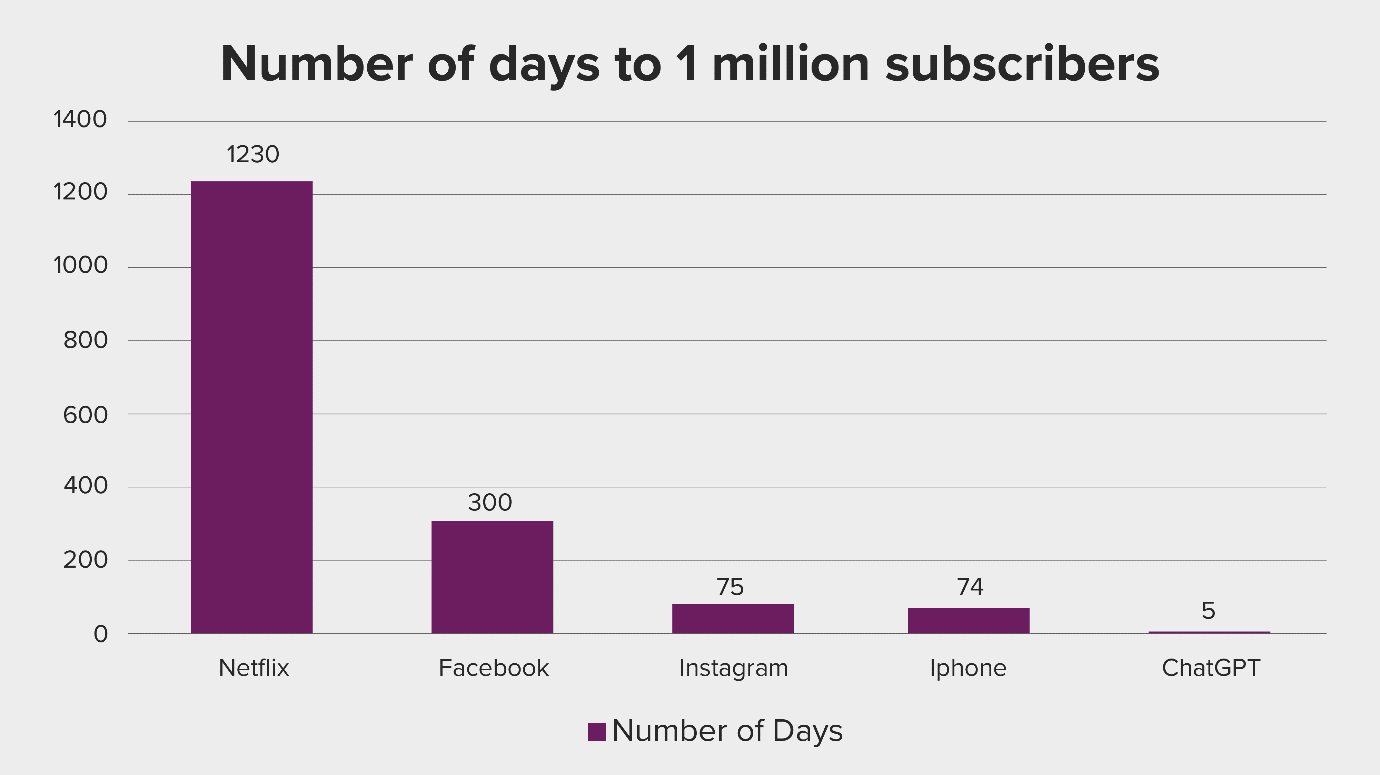

ChatGPT has clearly proven one thing: there is a huge market for the ability to ask open-ended questions about any topic and receive a response that is not hand-coded. The numbers suggest very strong demand for this capability. After its launch on November 30, 2022, ChatGPT took just five days to reach more than 1 million users. Compare that with the other platforms shown here and the time they took to reach the 1 million milestone. In addition, within about six weeks of the launch, OpenAI was reportedly valued at around $29B.

Source: It’s time to pay attention to AI

ChatGPT can be applied to a variety of use cases, including:

- Text Generation: To generate text that resembles a given input. This can be useful for tasks such as writing creative fiction, generating product descriptions, and more.

- Q&A: To answer questions based on a given context. This can be useful for tasks such as creating chatbots or information retrieval systems.

- Language Translation: To translate text from one language to another. This can be useful for tasks such as machine translation or creating multilingual chatbots.

- Fine-Tuning: To adapt the pre-trained model to a specific task or an industrial application. Once the model is provided with a dataset and a task-specific objective, training adjusts its parameters to optimize for that objective.

But what’s the value add? It's still a bot!

That’s true; it is a chatbot with conversational capability that provides human-like interactions. While it seems similar to “Hey Siri”, “Alexa” and “Hey Google”, it is built on one of the most advanced Natural Language Processing models currently available. The underlying GPT-3 family of models has up to 175B parameters and was trained on text available on the internet. ChatGPT additionally uses reinforcement learning that incorporates human feedback, which is an enhancement over the earlier GPT-3 release.

So, what’s the underlying architecture?

As mentioned above, the technology architecture for ChatGPT is based on the transformer architecture, a type of neural network that is particularly well-suited for processing sequential data such as text. The transformer architecture was first introduced in 2017 in the paper "Attention Is All You Need" by Google researchers.

In the case of ChatGPT, the model is composed of multiple layers of transformer blocks, with each block consisting of multi-head self-attention mechanisms and feed-forward neural networks. The self-attention mechanism allows the model to weigh the importance of different parts of the input when making predictions, while the feed-forward neural networks process the input and make predictions.
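To make the self-attention step a little more concrete, here is a minimal sketch in plain Python. It is a simplification under stated assumptions, not the actual ChatGPT implementation: it uses a single head, it skips the learned query, key and value projections, and the toy vectors in `tokens` are made up for illustration. It does apply the causal mask used by GPT-style models, so each position can only look at itself and earlier positions.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(x):
    """Single-head scaled dot-product self-attention over token vectors x.

    Simplification: queries, keys and values are the inputs themselves;
    a real transformer block applies learned projection matrices first.
    """
    d = len(x[0])
    outputs, all_weights = [], []
    for i, query in enumerate(x):
        # Causal mask: position i may only attend to positions 0..i.
        visible = x[: i + 1]
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in visible
        ]
        weights = softmax(scores)
        # Output is the weighted sum of the visible vectors.
        out = [sum(w * v[j] for w, v in zip(weights, visible)) for j in range(d)]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Three made-up 2-dimensional token vectors.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_self_attention(tokens)
for position, row in enumerate(weights):
    print(position, [round(w, 3) for w in row])
```

Each printed row shows how much one position weighs the tokens it is allowed to see. The first token can only attend to itself, so its single weight is 1.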

The original transformer pairs an encoder, which is responsible for understanding the input text, with a decoder, which generates the output text. GPT models, including the one behind ChatGPT, keep only the decoder stack: the prompt and the response form a single sequence, and masked self-attention lets each position attend only to the tokens that come before it. The model then generates the output one token after another, feeding each new token back in as input.
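The token-by-token generation described above can be sketched as a simple loop. The lookup table `TOY_MODEL` below is a hypothetical stand-in for a trained network, and it only looks at the last token, whereas a real model conditions on the whole sequence so far. The loop uses greedy decoding (always pick the highest-scoring token), which is one common choice among several.

```python
# Hypothetical stand-in for a trained model: next-token scores
# keyed by the most recent token. All values are made up.
TOY_MODEL = {
    "<start>": {"hello": 0.9, "world": 0.1},
    "hello": {"world": 0.8, "<end>": 0.2},
    "world": {"<end>": 1.0},
}

def generate(prompt, max_new_tokens=10):
    """Append one token at a time until <end> or the token limit."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        scores = TOY_MODEL.get(tokens[-1], {"<end>": 1.0})
        next_token = max(scores, key=scores.get)  # greedy choice
        if next_token == "<end>":
            break
        # The new token becomes part of the input for the next step.
        tokens.append(next_token)
    return tokens

print(generate(["<start>"]))  # prints ['<start>', 'hello', 'world']
```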

The model is pre-trained on a massive dataset of internet text and fine-tuned on focused tasks using task-specific data. The fine-tuning process adjusts the model's parameters to better fit the specific task at hand, allowing it to generate more accurate and relevant responses.
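As a rough illustration of what "adjusting parameters to fit a task" means, the sketch below fine-tunes a tiny two-parameter linear model with gradient descent. Everything here is an assumption for illustration: the linear model stands in for a language model, and the starting weights, task data, learning rate and step count are made up. Only the idea carries over: start from existing parameters and nudge them to reduce the error on task-specific data.

```python
def mse(w, b, data):
    """Mean squared error of the model y = w * x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, data, lr=0.05, steps=200):
    """Run gradient descent starting from the given parameters."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical "pre-trained" parameters and task-specific data (y = 2x + 1).
pretrained_w, pretrained_b = 1.0, 0.0
task_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]

tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
print("error before:", mse(pretrained_w, pretrained_b, task_data))
print("error after: ", mse(tuned_w, tuned_b, task_data))
```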

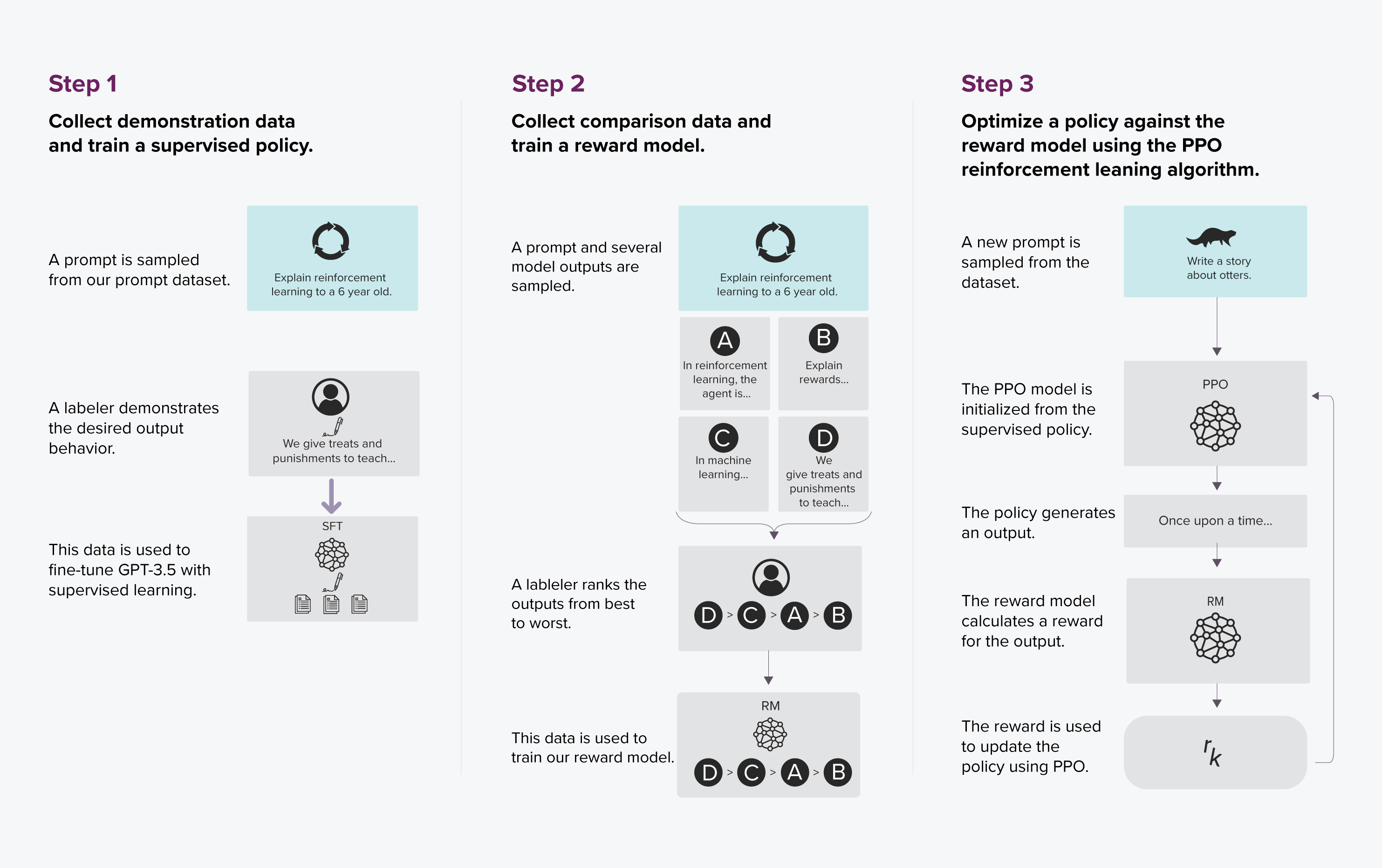

Training follows the Reinforcement Learning from Human Feedback (RLHF) approach, which combines supervised fine-tuning with reinforcement learning. Humans rank the outputs of the engine from best to worst. Based on this feedback, the AI is digitally rewarded for the responses people prefer and improves over time. OpenAI has reported that repeating this process of fine-tuning and re-ranking can produce a model that is smaller than GPT-3 yet preferred by human raters.

Source: ChatGPT Architecture
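The ranking step described above can be sketched as follows. This is a hedged illustration rather than OpenAI's code: it turns one human ranking into (preferred, rejected) pairs and scores them with a pairwise logistic loss, a formulation commonly used for training reward models. The response names and the reward values in `rewards` are hypothetical; in practice the rewards would come from a learned reward model.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_responses):
    """Convert a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))

def pairwise_loss(reward_preferred, reward_rejected):
    """-log(sigmoid(r_preferred - r_rejected)): small when the preferred
    response already receives the higher reward."""
    return math.log(1 + math.exp(-(reward_preferred - reward_rejected)))

# One human ranking, best first (made-up labels).
ranked = ["answer A", "answer B", "answer C"]
pairs = ranking_to_pairs(ranked)

# Hypothetical scores a reward model might currently assign.
rewards = {"answer A": 2.0, "answer B": 0.5, "answer C": -1.0}

loss = sum(pairwise_loss(rewards[p], rewards[r]) for p, r in pairs) / len(pairs)
print(pairs)
print(round(loss, 4))
```

Lowering this loss pushes the reward model to score responses in the same order the human ranked them, and that reward signal is what the reinforcement learning step then optimizes against.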

So, can it do everything?

Well, the short answer is NO. This is a great tool to have as it can help enterprises in a variety of ways. Some of the biggest use cases could be around content generation, technical writing and integrating it as a search engine on top of an enterprise repository, to name a few. However, there are certain limitations to be kept in mind. Some of those are:

a. Cannot browse the web live

b. Is trained on data only up to 2021

c. Does not offer a list of options to choose from

d. Cannot return images

e. Offers no way to validate the reference sources behind the generated content

The question that arises is: if GPT is an enhanced version of today’s Alexa and Siri, why can't we have the same on all mobile devices? The answer is hardware and scale. As per one study, one AI query costs 10 to 100 times as much as a Google query. The one big point that OpenAI CEO Sam Altman makes is that, while the chatbot is great, it is still built on historical data. It does not add any new fundamental knowledge: the chatbot will not create a new concept that doesn’t already exist.

In addition, concerns have been raised about bias in the tool itself. One school of thought holds that the chatbot could be politically biased, mostly because the platforms and institutions from which it gathers its information lean towards the left. Racial bias is another area where assessments have been mixed.

In the end, we need to keep in mind that ChatGPT can only draw on what it has seen as part of its training. For that reason, it can sometimes generate biased or inappropriate responses, particularly when its training data includes biases. It may lack the context or background knowledge a question requires, which can lead to incorrect or nonsensical responses, and it cannot reliably handle words and phrases that did not appear in its training data.

Can ChatGPT be tuned for industry specific usage?

There are several use cases that demonstrate the capabilities and potential applications of ChatGPT. Some of these include:

- OpenAI's DALL-E: This is a separate OpenAI model, related to the GPT family rather than built on ChatGPT itself, that generates images based on text descriptions, for instance, “a two-story pink house with a white picket fence.”

- OpenAI's GPT-3 for customer service: OpenAI has been working with companies to develop customer service chatbots powered by GPT-3, which boost customer engagement and improve efficiency. This can be customized and fine-tuned for a variety of industries including Banking, Retail, Utilities, to name a few.

- Hugging Face's language correction GPT-3: This is being used for improving the language of the text written by non-native speakers.

How to get started with ChatGPT?

Getting started with ChatGPT involves a few simple steps:

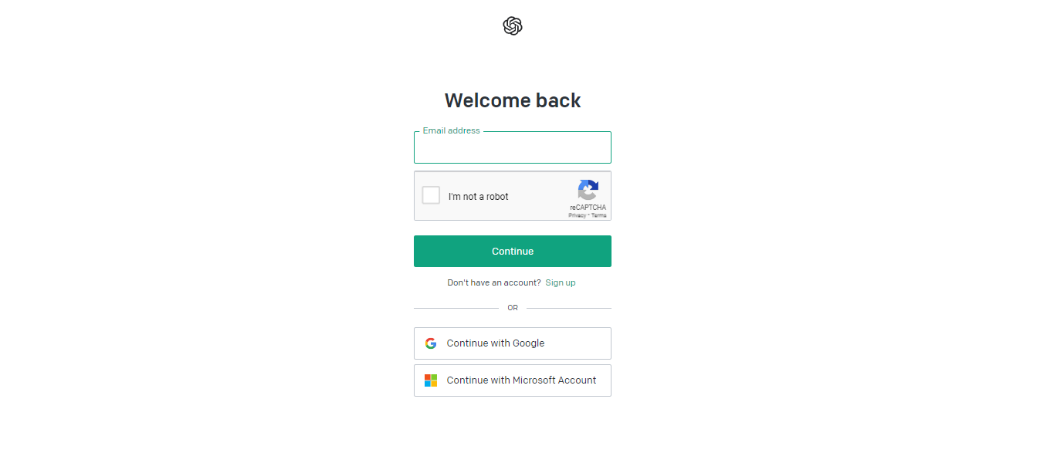

1. Open the link: ChatGPT Architecture and click on the ‘Try ChatGPT’ bar.

2. The following screen will appear. You can either use your existing account to sign in or you can sign up.

3. You can also use your Microsoft or Google account to log in.

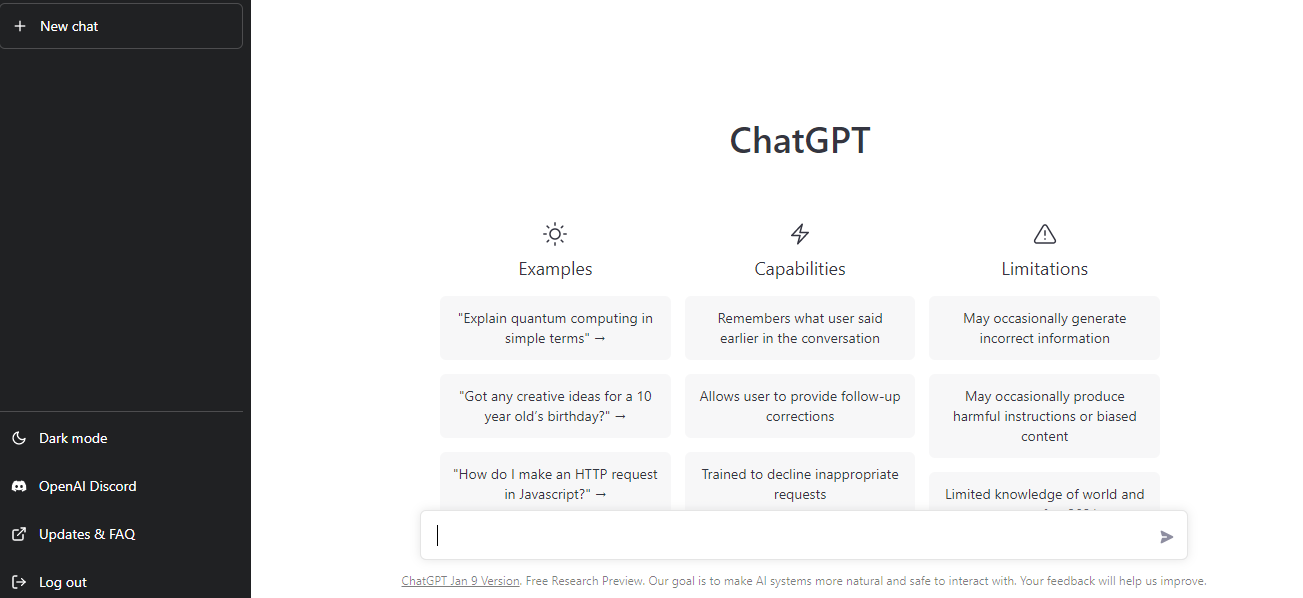

4. Once you’re logged in, you can use ChatGPT by typing in the message box, or start a new chat to work on a separate task.

Summary:

ChatGPT looks set to transform the industry and may be the next big thing after Google. It has given a whole new dimension to Natural Language Processing. Organizations can use this technology to add a lot of value, either for their internal use or to go to market with a specific product.

As Sam Altman said in this interview, substantial innovation will come from using these very large models. Organizations will benefit from taking these models and fine-tuning them to their organizational needs and industry-specific research, rather than building them from scratch.