Suppose you discover a great business case for a voice application and now you want to develop one yourself. In this article, Xebia guru Mike Woudenberg shares his experience of using Google Dialogflow—software designed to model voice-interfaces—to do just that.

With Dialogflow, you pay for voice processing as a service. Google takes care of the complexity of voice recognition. And while programming skills are still needed to use the tool, this leaves you free to focus on building whatever user interaction you have in mind. Google handles the Natural Language Processing and continues to develop that technology in the background. You can create a working voice-interface in three easy steps:

Step 1: Train

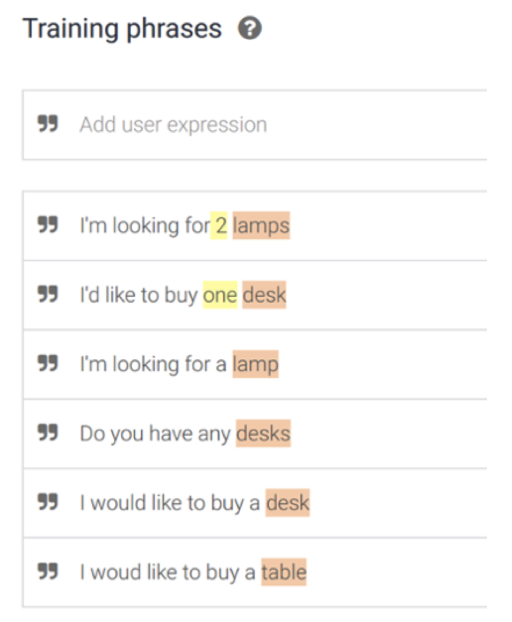

Suppose you have an online store, your customers call hundreds of times a day with questions about order status, and you want Dialogflow to automate this process. Your first step is a brainstorming session in which you come up with a large number of questions your customers might ask. You then use these questions as training phrases for the system. Figure 1 shows an example for a home decoration webshop. The highlighted words in Figure 1 are ones Dialogflow recognizes as parameters, such as a product or an order number. The system uses these parameters to get to the right answer.

Figure 1. Dialogflow training phrases
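
To make the idea of training phrases and parameters concrete, here is a minimal sketch in plain Python. It is not Dialogflow's own data format or API: the `TrainingPhrase` class, the example phrases, and the parameter names `order_number` and `product` are all assumptions made up for illustration. It only shows how each brainstormed question can be paired with the words that should be highlighted.

```python
from dataclasses import dataclass, field


@dataclass
class TrainingPhrase:
    """One brainstormed customer question (hypothetical structure)."""
    text: str
    # Maps each highlighted word to the parameter it fills (made-up names).
    parameters: dict[str, str] = field(default_factory=dict)


ORDER_STATUS_PHRASES = [
    TrainingPhrase("Where is my order 12345?", {"12345": "order_number"}),
    TrainingPhrase("When will my lamp be delivered?", {"lamp": "product"}),
    TrainingPhrase("Has my sofa shipped yet?", {"sofa": "product"}),
]


def parameter_names(phrases):
    """Return the set of parameter names the phrases teach the agent."""
    return {name for p in phrases for name in p.parameters.values()}


def check_annotations(phrases):
    """Return phrases whose highlighted words do not occur in their text."""
    return [p for p in phrases if any(word not in p.text for word in p.parameters)]
```

Running `check_annotations` on your list before entering the phrases in Dialogflow is a cheap way to catch typos in the highlighted words.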

Step 2: Test

Next, you'll test this with real users. Are there any bottlenecks in the process? How often do they occur? You adjust the process accordingly. It's a fast, iterative process, just like a regular design flow. Only the interface is different: it is a voice assistant, or an agent in Dialogflow terminology.

Step 3: Take-off

Once everything's been tested, you go live, but that's not the end of development: maintaining your speech solution is an ongoing process. Dialogflow has an interface similar to Google Analytics which shows you where users disengage, what questions they ask, and what answers they provide to your questions. You can add unexpected questions and answers to the training set.
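
As a rough illustration of feeding unexpected results back into training, the sketch below ranks the user questions that matched no intent, so the most frequent ones can be added as training phrases first. The log format (a list of dicts with `utterance` and `matched_intent` keys) is an assumption for this example and not an export format that Dialogflow provides.

```python
from collections import Counter


def top_unmatched(log, limit=3):
    """Return the most frequent utterances that matched no intent."""
    counts = Counter(
        entry["utterance"].strip().lower()  # normalize so duplicates group together
        for entry in log
        if entry["matched_intent"] is None
    )
    return counts.most_common(limit)


# Hypothetical conversation log; None means the agent did not understand.
conversation_log = [
    {"utterance": "Where is my order?", "matched_intent": "order_status"},
    {"utterance": "Can I return a lamp?", "matched_intent": None},
    {"utterance": "can I return a lamp?", "matched_intent": None},
    {"utterance": "Do you ship to Belgium?", "matched_intent": None},
]

candidates = top_unmatched(conversation_log)
```

Here `candidates` lists the return question first, because it was asked twice without being understood.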

Everything you always wanted to know about Dialogflow…

Few things in life are quite as simple as counting to three. If this were the scenario for a voice-interface, these would be the questions about Dialogflow we suspect our users would ask:

Are there any shortcuts?

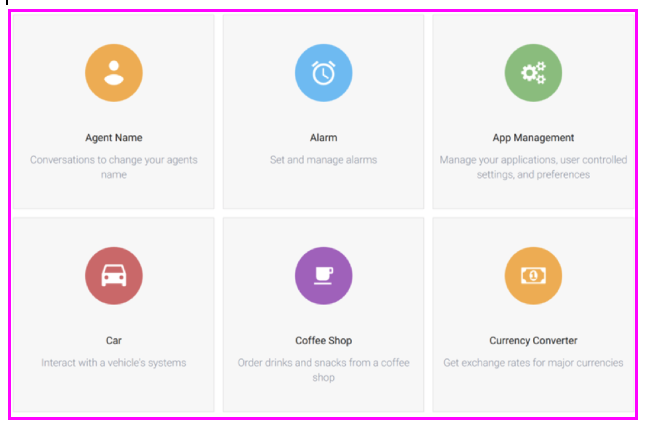

Sure there are. Dialogflow has templates for agents that handle a specific task, such as setting an alarm or ordering drinks at a coffee shop, as shown in Figure 2. You can use these as a quick starting point or as an example for your own automated tasks. The Dialogflow website also has plenty of guides to help you on your way.

Figure 2. Dialogflow templates for specific tasks

Where’s the added value?

Dialogflow can provide richer information than just a simple question-answer dialog. It does this through an API, an interface that lets applications talk to each other. For example, if your API can access your inventory system, Dialogflow can retrieve the status of a specific order and pass that information on to the person in the conversation. Without such integration, the best you could do is run your FAQs through a chatbot. With APIs, there are many more options.
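
A minimal sketch of such an integration is shown here, written as a plain Python function so it can be tested without a web server. The field names `queryResult`, `intent.displayName`, `parameters`, and `fulfillmentText` follow the Dialogflow ES webhook JSON format as we understand it, so check them against the current documentation. The intent name `order_status`, the parameter `order_number`, and the `ORDERS` lookup table are assumptions that stand in for your own agent and order system.

```python
# Hypothetical stand-in for a call to your inventory or order system.
ORDERS = {"12345": "shipped", "67890": "being packed"}


def handle_webhook(request: dict) -> dict:
    """Build a webhook response for an order-status question."""
    query = request.get("queryResult", {})
    intent = query.get("intent", {}).get("displayName")
    # Assumes the order number arrives as a string parameter.
    order_number = str(query.get("parameters", {}).get("order_number", "")).strip()

    if intent != "order_status":
        text = "Sorry, I can't help with that yet."
    elif order_number in ORDERS:
        text = f"Order {order_number} is {ORDERS[order_number]}."
    else:
        text = "I couldn't find that order. Could you repeat the order number?"
    return {"fulfillmentText": text}
```

In a real deployment this function would sit behind an HTTPS endpoint that the agent calls, and the dictionary lookup would be replaced by a query to your order system.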

Is Dialogflow limited to Google services?

Google handles the speech recognition and the Natural Language Processing, but the software is not limited to Google's own speech assistant. It can interface with several other systems, such as Facebook Messenger and Slack. Siri is a bit more complicated: what you build on Dialogflow can run in iOS apps, which in turn call Siri.

Where can customers find my speech assistant?

If your voice-interface is integrated with your existing channels, they will find it by themselves. Right now, there is no App Store for voice applications. On Google Assistant you’ll find a list of assistants and information on what each one does.

Tell me about personalization

There are different ways to customize the dialog with your users. The easiest way to keep track of a user's identity is to simply ask them in conversation and then integrate their answers. During the conversation, you can collect different variables, such as age or how long they've been a customer, and then customize the conversation accordingly. So you don't always need an identity in order to personalize, as long as you know something about the user. Going the extra step and linking the user to their customer ID in your order system allows for truly contextual communication.
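
The sketch below shows one simple way to customize a reply from variables collected during the conversation. The parameter names `name` and `years_as_customer` and the five-year threshold are assumptions chosen for illustration; they are not built into Dialogflow.

```python
def personalize_greeting(params: dict) -> str:
    """Build a greeting from whatever is known about the user so far."""
    name = params.get("name")
    years = params.get("years_as_customer")

    greeting = f"Hi {name}!" if name else "Hi!"
    # Hypothetical rule: acknowledge customers of five years or more.
    if years is not None and years >= 5:
        greeting += " Thanks for being with us for so long."
    return greeting + " How can I help you today?"
```

With no information at all, the function falls back to a neutral greeting, which matches the point above that personalization does not require a full identity.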

What about privacy?

Voice applications are subject to the same rules as other channels. You need the user's permission to store their data, and this process must comply with the GDPR (known as the AVG in the Netherlands). Google also requires you to draw up a privacy policy for your assistant. Specific privacy rules apply to data sent to Google's API.

Is Dialogflow a self-learning system?

One of Dialogflow's greatest strengths is its continuously improving recognition capability, but it will not come up with answers to questions it has never seen. You have to guide this development manually, based on the data about where users disengage.

What are the most common pitfalls of voice applications?

The biggest pitfall is that the system misinterprets a user's question. Either it doesn't recognize the right variables or it misjudges the context of the call, and the user quits as a result. People have little patience with a voice assistant. If the conversation takes too long, they are likely to switch to an alternative, more efficient channel of communication.

What’s next?

Dialogflow continues to improve at recognizing natural language and to expand the number of ways to process it. This is awesome because it makes things like shopping using just your voice much easier. You're not limited to listing one item at a time, but can order everything in a single sentence, and this is where the potential for growth lies. It gets even better when the speech assistant starts recognizing patterns: for weeks, you've ordered a box of eggs and a kilo of bananas, so do you want to repeat that order this week? This technology can also be used in totally different environments, such as health care. A surgeon might have four or five assistants to monitor the patient: one for blood pressure, another for heart rate, and so on. A voice assistant can be a single access point for all this information.